Executive Summary

The most common criticism of using Monte Carlo analysis for retirement planning projections is that it may not fully account for occasional bouts of extreme market volatility, and that it understates the risk of "fat tails" that can derail a retirement plan. As a result, many advisors still advocate using rolling historical time periods to evaluate the health of a prospective retirement plan, or rely on actual-historical-return-based research like safe withdrawal rates, or simply eschew Monte Carlo analysis altogether and project conservative straight-line returns instead.

In this guest post, Derek Tharp – our Research Associate at Kitces.com, and a Ph.D. candidate in the financial planning program at Kansas State University – analyzes Monte Carlo projection scenarios relative to actual historical scenarios, to compare which does a better job of evaluating sequence of return risk and the potential for an "unexpected" bear market... and finds that in reality, Monte Carlo projections of a long-term retirement plan using typical return and standard deviation assumptions are actually far more extreme than real-world historical market scenarios have ever been!

For instance, when comparing a Monte Carlo analysis of 10,000 scenarios based on historical 60/40 annual return parameters to historical returns, it turns out that 6.5% of Monte Carlo scenarios are actually worse than even the worst case historical scenario has ever been! Or viewed another way, a 93.5% probability of success in Monte Carlo is actually akin to a 100% success rate using actual historical scenarios! And if the advisor assumes lower-return assumptions instead, given today's high market valuation and low yields, a whopping 50% to 82% of Monte Carlo scenarios were worse than any actual historically-bad sequence has ever been! As a result, despite the common criticism that Monte Carlo understates the risk of fat tails and volatility relative to using rolling historical scenarios, the reality seems to be the opposite – that Monte Carlo projections show more long-term volatility, resulting in faster and more catastrophic failures (to the downside), and more excess wealth in good scenarios (to the upside)!

So how is it that Monte Carlo analysis overstates long-term volatility when all criticism has been to the contrary (that it understates fat tails)? The gap emerges because of a difference in time horizons. When looking at daily or weekly or monthly data - the kind that leveraged investors like hedge funds often rely upon - market returns do exhibit fat tails and substantial short-term momentum effects. However, in the long run - e.g., when looking at annual data - not only do the fat tails entirely disappear, but long-term returns actually lack any extreme tails at all! The reason is that in the long run, returns seem to exhibit “negative serial correlation” (i.e., mean reversion – whereby longer-term periods of low performance are followed by periods of higher performance, and vice-versa). Yet by default, Monte Carlo analysis assumes each year is entirely independent, and that the risk of a bear market decline is exactly the same from one year to the next, regardless of whether the market was up or down for the past 1, 3, or 5 years already. In other words, Monte Carlo analysis (as typically implemented in financial planning software) doesn't recognize that bear markets are typically followed by bull markets (as stocks get cheaper and eventually rally), and this failure to account for long-term mean reversion ends up projecting the tails of long-term returns to be more volatile than they have ever actually been!

The bottom line, though, is simply to recognize that despite the common criticism that Monte Carlo analysis and normal distributions understate “fat tails”, when it comes to long-term retirement projections, Monte Carlo analysis actually overstates the risk of extreme drawdowns relative to the actual historical record – yielding a material number of projections that are worse (or better) than any sequence that has actually occurred in history. On the one hand, this suggests that Monte Carlo analysis is actually a more conservative way of projecting the safety of a retirement plan than "just" relying on rolling historical returns. Yet on the other hand, it may actually lead prospective retirees to wait too long to retire (and/or spend less than they really can), by overstating the actual risk of long-term volatility and sequence of return risk!

Monte Carlo Analysis And Relying On A Normal Distribution

While already included in most financial planning software solutions, Monte Carlo analysis remains a somewhat controversial projection tool for financial planners, due to the fact that it commonly relies on a normal distribution to project the probability of future returns in a world where many have suggested that returns are not actually normally distributed. Which raises the question of whether or to what extent Monte Carlo analysis projections might be understating the risk of a retirement plan?

To evaluate this question, it helps to first revisit what the normal distribution is, and how it actually works.

The normal (or Gaussian) distribution is one of the most commonly used distributions in both natural and social sciences. From a statistical perspective, there are many neat and useful characteristics of a normal distribution, including the fact that distributions of many naturally occurring phenomena—such as human height, IQ scores, and measurement errors—can be approximated with a normal distribution.

One of the most common ways advisors use normal distributions is in Monte Carlo simulations. A Monte Carlo simulation models future outcomes by randomly selecting returns, based on the likelihood that they occur – where the “likelihood” is quantified by the average and standard deviation of a normal distribution, which recognizes that extreme returns (to the upside or downside) are less common.

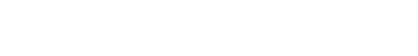

For instance, if an advisor wants to conduct a simulation assuming a 5% average return and 10% standard deviation, the following normal distribution would be produced:

As you can see in the graphic above, the most common return that would be selected randomly is 5%, while more extreme values would come up less frequently. Specifically, in a normal distribution, 68% of the values occur within 1 standard deviation of the mean (in this case, a return between -5% and +15%), 95% of the results are within 2 standard deviations (e.g., -15% to 25%), and 99.7% of the values fall within 3 standard deviations (which would be returns from -25% to 35%). In other words, given the parameters above, returns greater than 35% or less than -25% (i.e., more extreme than 3 standard deviations), would only be expected to occur about 0.3% of the time.
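The percentages above can be checked with a short sketch. It relies on Python's standard-library `statistics.NormalDist` and the 5% mean and 10% standard deviation from the example; the helper name `share_within` is purely illustrative.

```python
from statistics import NormalDist

# Example parameters from the text: 5% average return, 10% standard deviation.
returns = NormalDist(mu=0.05, sigma=0.10)

def share_within(dist, k):
    """Probability that a random draw lands within k standard deviations of the mean."""
    low = dist.mean - k * dist.stdev
    high = dist.mean + k * dist.stdev
    return dist.cdf(high) - dist.cdf(low)

for k in (1, 2, 3):
    low = returns.mean - k * returns.stdev
    high = returns.mean + k * returns.stdev
    print(f"within {k} SD ({low:+.0%} to {high:+.0%}): {share_within(returns, k):.1%}")
```

The share falling outside 3 standard deviations is simply `1 - share_within(returns, 3)`, which is where the "about 0.3% of the time" figure comes from.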

However, as noted earlier, a leading criticism of Monte Carlo analyses is that “extreme” returns can occur more often than the 0.3% frequency implied by a normal distribution – in other words, the “tails” of the distribution are “fatter” (i.e., more frequent) than what a normal distribution would project, particularly to the downside (i.e., a catastrophic bear market). Although, notably, the extent to which market returns have “fat tails” depends on the time horizon involved. While many studies have shown that daily and monthly stock returns appear to have fatter tails, when projected annually (as is common in financial planning projections), the downside fat tails largely disappear.

This lack of “fat tails” in long-term annual stock returns also holds true for 60/40 portfolio returns, based on large-cap U.S. stocks and Treasury Bills. Using Robert Shiller’s data going back to 1871, we can use a Shapiro-Wilk test to examine whether annual returns exhibit a statistically significant deviation from a normal distribution – and the findings suggest they do not. In other words, while there may be “fat tails” in the short-term (daily or monthly) return data, they average out by the end of the year.

Comparing Historical Returns And Monte Carlo Projections

Notwithstanding the lack of fat tails evident in the data for annual-return retirement projections, many financial planners eschew Monte Carlo analysis and prefer to simulate prospective retirement plans using actual historical return sequences, which, by definition, capture actual real-world volatility (potential fat tails and all) that has occurred in the past. In fact, the entire origin of Bengen’s “4% rule” safe withdrawal rate was simply to model retirement spending through rolling historical time periods, identify the worst historical scenario that has ever occurred, and use that as a baseline for setting a “safe” initial spending rate in retirement.

We can get a sense of whether or to what extent Monte Carlo analysis understates long-term tail risk relative to actual historical returns by actually comparing them in side-by-side retirement projections.

Data available from Robert Shiller, going back to 1871, provides a sequence of actual historical returns (for large-cap U.S. stocks, and Treasury Bills) that we can use to evaluate the impact of “real-world” market volatility on retirement, and then compare it to what a Monte Carlo analysis projection would show using the same average return and volatility as that historical data.

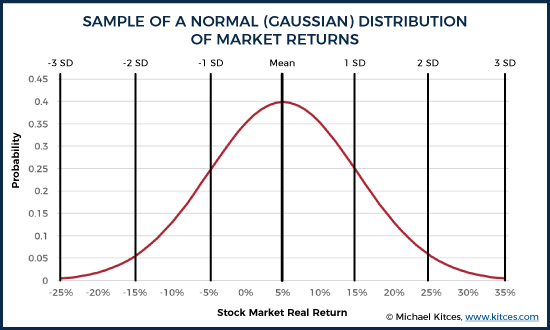

If we assume a 60/40 portfolio of stocks and fixed income (annually rebalanced), and a portfolio that begins at $1,000,000 (to make the numbers round and easy), the starting point is to look back into the initial withdrawal rate that would have “worked” for any particular rolling 30-year period (based on actual historical return sequences). Accordingly, we find that in the worst-case scenario the “safe” spending rate was $40,766 at the beginning of the first year (with spending adjusted each subsequent year for inflation). This equates to a 4.08% initial withdrawal rate (relative to the starting account balance), reaffirming Bengen’s 4% rule.
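To make the idea of a withdrawal rate that "worked" concrete, here is a minimal sketch of how the largest sustainable spending amount could be computed for a single sequence of real returns, and then for every rolling window. It assumes withdrawals are taken at the start of each year and that returns are already inflation-adjusted, as described above. The function names are hypothetical, and the sketch is not necessarily how the figures in this article were produced.

```python
def max_initial_withdrawal(real_returns, start_balance=1_000_000):
    """Largest constant real withdrawal (taken at the start of each year)
    that leaves exactly $0 after the final year's withdrawal."""
    discount_sum = 0.0
    growth = 1.0  # cumulative real growth before the current year's withdrawal
    for r in real_returns:
        discount_sum += 1.0 / growth  # present value of $1 withdrawn this year
        growth *= 1.0 + r
    return start_balance / discount_sum

def safe_withdrawal_rate(annual_real_returns, years=30):
    """Lowest sustainable initial withdrawal rate across all rolling windows."""
    windows = (
        annual_real_returns[i:i + years]
        for i in range(len(annual_real_returns) - years + 1)
    )
    return min(max_initial_withdrawal(w, 1.0) for w in windows)
```

Fed a full series of annual real 60/40 returns, `safe_withdrawal_rate` would return the worst-case rate across all rolling 30-year periods; no actual return data is included here.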

Notably, though, most of the time a 4.08% initial withdrawal rate is unnecessary. If we assume that the retiree always takes that $40,766 of initial spending and adjusts each subsequent year for inflation, we end up with the following range of wealth outcomes.

In the chart above, the worst 30-year sequence in history (beginning in 1966) is indicated in red. For that one worst-case scenario, the retiree still makes it to the end (but just barely), thus necessitating that 4.08% initial withdrawal rate. In all the other scenarios, though, the 4.08% safe withdrawal rate is actually “too” conservative, and the portfolio finishes with sometimes very substantial (inflation-adjusted) wealth left over at the end.

In fact, the retiree actually dies with more inflation-adjusted wealth than they had entering retirement in over 50% of scenarios by using that 4% initial withdrawal rate! And in 30% of scenarios, retirees would have died with nearly 200% of their initial inflation-adjusted wealth or more! In nominal terms, this means a retiree who entered retirement with $1,000,000 would have died with $4,000,000 (or more) 30% of the time, even after taking an inflation-adjusted annual distribution each year (assuming an average inflation rate of 2.5%).

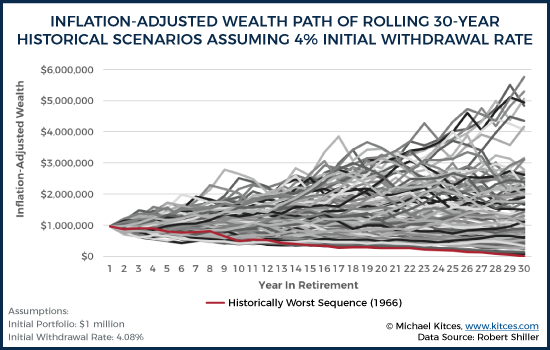

Given these results based on the actual historical record, we can then re-evaluate the same retirement time period, using the same historical return data, by running a Monte Carlo analysis that uses the average return and standard deviation of the annual returns that underlie this historical time period. In this case, the data from 1871 to 2015 show that the annually rebalanced 60/40 portfolio had an average annual real return of 5.9%, with a standard deviation of 11.2%.

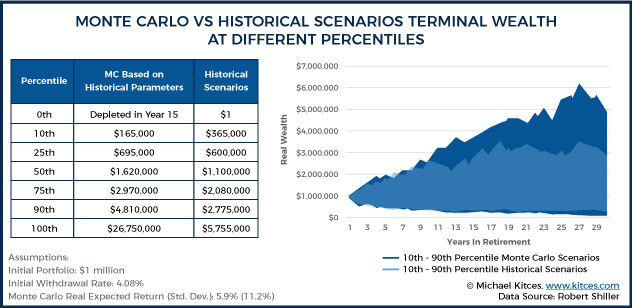

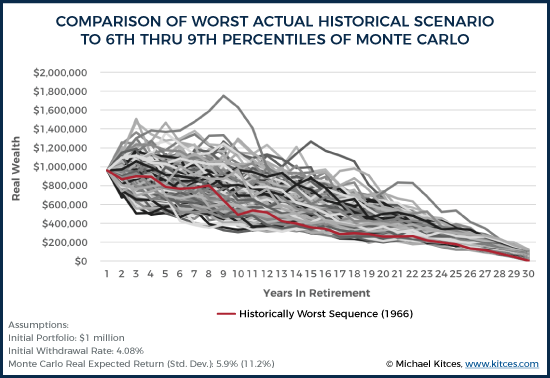

Using these real return and standard deviation inputs, the chart below shows various percentile outcomes of a Monte Carlo analysis with 10,000 iterations. To see how “bad” the worst Monte Carlo scenarios are at a 4.08% initial withdrawal rate, these percentile outcomes are compared to the actual historical return sequences (which include the historical worst-case scenario in 1966, which necessitated the 4.08% initial withdrawal rate in the first place).
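A simulation along these lines can be sketched in a few lines of Python. This is only an illustration under stated assumptions: each year's real return is drawn independently from a normal distribution with the 5.9% mean and 11.2% standard deviation cited above, spending of $40,766 comes out at the start of each year, and the function name and random seed are arbitrary. Because the details of the software behind the article's figures are not known, the exact success rate will not match.

```python
import random

def simulate_ending_wealth(mean, sd, years=30, start=1_000_000,
                           withdrawal=40_766, trials=10_000, seed=0):
    """Ending real wealth for each trial; 0.0 means the portfolio was depleted."""
    rng = random.Random(seed)
    endings = []
    for _ in range(trials):
        balance = start
        for _ in range(years):
            balance -= withdrawal                 # real spending at the start of the year
            if balance <= 0:
                balance = 0.0                     # depleted: stop this trial
                break
            balance *= 1.0 + rng.gauss(mean, sd)  # independent annual real return
        endings.append(balance)
    return endings

endings = simulate_ending_wealth(mean=0.059, sd=0.112)
success_rate = sum(e > 0 for e in endings) / len(endings)
print(f"Probability of success: {success_rate:.1%}")
```

Sorting `endings` and reading off values at chosen positions gives the kind of percentile outcomes described above.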

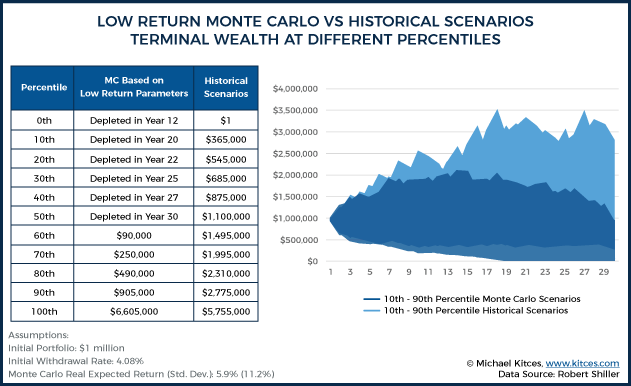

As the chart reveals, the worst and best Monte Carlo scenarios (0th and 100th percentiles) were actually far more extreme than any actual historical worst or best scenario. With the Monte Carlo analysis, the worst-case retiree scenario ran out of money as early as only 15 years into retirement, even though the same spending rate never actually ran out in any of the 114 rolling 30-year historical scenarios. Conversely, under the best Monte Carlo scenario, the retiree died with nearly $27 million in real wealth, even though the best case historical scenario finished with “just” $6 million of inflation-adjusted wealth at the end. The following chart summarizes the ending real wealth values at various percentiles.

The key point is that, despite the common criticism that Monte Carlo understates volatility relative to using rolling historical scenarios, the reality, at least relative to historical scenarios, is actually the opposite – the Monte Carlo projections show more long-term volatility, resulting in faster and more catastrophic failures (to the downside), and more excess wealth in good scenarios (to the upside). In fact, the 0th percentile historical scenario (worst case scenario, running out of money at the end of 30 years) was actually the 6.5th percentile in Monte Carlo, while the 50th percentile historically (finishing with $1.1M remaining) was the 37th percentile in the Monte Carlo projection, and the 100th percentile (best case scenario) historically was “just” the 93.7th percentile result with the Monte Carlo projection.
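Mapping a historical outcome onto the Monte Carlo distribution amounts to asking what share of simulated ending values are at or below it. A minimal sketch, using made-up ending values rather than the article's data (the function name is hypothetical):

```python
from bisect import bisect_right

def percentile_rank(value, simulated):
    """Percent of simulated outcomes that are at or below the given value."""
    ordered = sorted(simulated)
    return 100.0 * bisect_right(ordered, value) / len(ordered)

# Hypothetical ending-wealth figures, for illustration only.
simulated_endings = [0.0, 0.0, 250_000, 900_000, 1_400_000, 2_000_000, 3_500_000, 6_000_000]
print(percentile_rank(0.0, simulated_endings))        # share of trials that ran out of money
print(percentile_rank(1_100_000, simulated_endings))  # where a $1.1M outcome would fall
```

Applied to 10,000 simulated ending values, a historical worst case that finishes at $0 would land at whatever percentile corresponds to the share of depleted trials.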

Of course, it shouldn’t be surprising that Monte Carlo comes up with more extreme best and worst-case scenarios, given that it’s based on 10,000 trials (while the historical data has only 114 series of overlapping data points), and a higher volume of random trials should yield results that are more extreme.

Nonetheless, the point remains: plans that succeed in 100% of historical scenarios may only be projected to succeed 95% of the time in Monte Carlo, suggesting that the problem may not be that Monte Carlo is understating risk, but that it is overstating risk, given how frequently Monte Carlo projects scenarios that are more extreme than those that have been experienced in the past! (As it wasn’t just a 1-in-10,000 Monte Carlo scenario that was more extreme than the historical record… instead, it was 650-in-10,000!)

The Gap Between Monte Carlo And Reality: Long Sequences Without Mean Reversion

To understand why there is such a gap between the Monte Carlo results and the actual historical scenarios, we can delve further by looking at the actual sequence of real returns that underlies each.

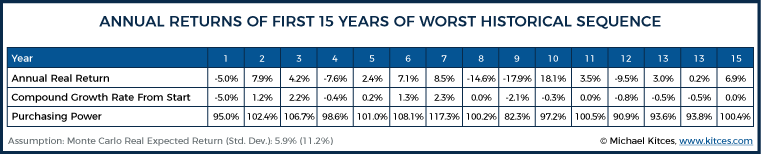

First, let’s examine the initial 15 years of real returns of a 60/40 portfolio starting in 1966 (the worst retirement starting year of the last century):

As the chart reveals, this worst-case historical 30-year sequence didn’t get off to a great start (as you’d expect, given the impact of sequence of returns). After 10 years, the average annual compound growth rate of the portfolio was negative. Which means, after accounting for inflation (and without even considering taxes or fees), a retiree with a 60/40 portfolio had already gone backward in inflation-adjusted terms. And this is before considering the impact of distributions themselves (i.e., these are time-weighted returns, not dollar-weighted). At the end of the entire 15 years, returns had still gone nowhere in real terms, and the portfolio was merely treading (inflation-adjusted) water.

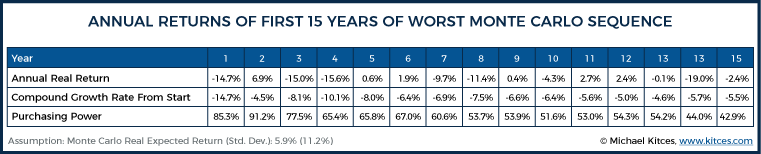

By contrast, though, the sequence starting in 1966 was a walk in the park compared to the worst Monte Carlo scenario:

0% real returns start to sound good after looking at how extreme the worst-case Monte Carlo results were! 10 years in, the retiree has experienced a -6.6% compounded real return, which, in annualized form, somewhat masks how bad these returns truly are. In real dollars, this retiree’s portfolio would have lost roughly 50% of its purchasing power in the first decade, and by year 15, it would only buy roughly 43% of its original value! And that’s before the impact of the withdrawals themselves!

Notably, the extremes within the year-to-year annual real returns of the two scenarios are roughly comparable. The historical sequence included 14.6% and 17.9% declines, while the Monte Carlo scenario included 19.0% and 15.6% declines. What differs, though, is the sequencing and frequency of negative returns – specifically, the way in which the Monte Carlo scenario continues to string together multiple bear markets without ever having a recovery.

In the historical scenario, the 2-year decline in years 8 and 9 (which represents the 1973-74 bear market) was followed by an 18.1% rebound. In the Monte Carlo scenario, though, the portfolio started out with a substantial market decline of 14.7%, had “just” a 6.9% rebound, then experienced a 1973-74 style decline in years 3 and 4… after which there was a less-than-3% rebound, followed by another 2-year bear market. And then, after mostly flat returns for 5 years, there was another bear market. And thus, by the end, the Monte Carlo scenario eroded a whopping 57% of its real purchasing power, even though the worst actual historical scenario merely broke even on purchasing power after 15 years (which admittedly is still a horrible sequence compared to a long-term real return that averages closer to 5%/year!).

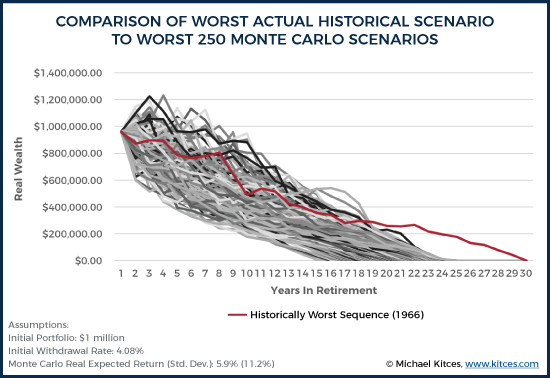

And notably, this phenomenon isn’t unique to just the worst-case Monte Carlo scenario. If we look at the worst 250 Monte Carlo scenarios (i.e., the worst 2.5% of the 10,000 trials), all of them end up with an ongoing series of extreme negative returns with little-or-no rebounds, such that they all deplete in 15-24 years. Yet again, the actual worst-case historical scenario with this spending rate still lasted for 30 years.

It’s not until we move up to the worst 650 to 900 Monte Carlo scenarios (i.e., the 6.5th to 9th percentiles) that we even find Monte Carlo results “as bad” as the actual historical record. Or, viewed another way, a 93.5% probability of success in Monte Carlo was the equivalent of a 100% success rate in the historical record!

Still, even in these Monte Carlo trials, the fact that Monte Carlo analysis does not consider the natural rebound effects of markets remains evident. For instance, consider the standout iteration above that jets off by itself to the upside, approaching $1.8 million in year 8 above. In this case, the scenario started off with astonishingly strong returns for a 60/40 portfolio, as real returns were 21%, 20%, 9%, 2%, 4%, 9%, 10%, 13% over the first 8 years, which is an average annual compound real return of 10.8% for 8 years! Yet this scenario still finishes in the bottom 10% overall, because the next series of returns were equally improbable in the other direction, with the sequence of -5%, -11%, -27%, -25%, -17%, 18%, -15%, -4%, -14%, over the next 9 years, which equates to a -11.9% compounded real return for 9 years… or a 68% real decline in 9 years, far worse than even the worst actual 9-year sequence in history!
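The compounding arithmetic behind these figures is easy to reproduce. The sketch below takes the two return sequences quoted above and computes the annualized and cumulative real return for each; the helper names are illustrative.

```python
import math

def cumulative_change(returns):
    """Total change in real value after compounding a sequence of annual returns."""
    return math.prod(1.0 + r for r in returns) - 1.0

def annualized_return(returns):
    """Compound (geometric) average annual return for the sequence."""
    return (1.0 + cumulative_change(returns)) ** (1.0 / len(returns)) - 1.0

strong_start = [0.21, 0.20, 0.09, 0.02, 0.04, 0.09, 0.10, 0.13]
collapse = [-0.05, -0.11, -0.27, -0.25, -0.17, 0.18, -0.15, -0.04, -0.14]

print(f"First 8 years: {annualized_return(strong_start):.1%} per year")
print(f"Next 9 years:  {annualized_return(collapse):.1%} per year, "
      f"{cumulative_change(collapse):.0%} cumulative")
```

These work out to roughly the 10.8% and -11.9% annualized returns, and the 68% real decline, cited in the paragraph above.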

In other words, the gap that is emerging between Monte Carlo and historical market returns may not just be due to the fact that 10,000 Monte Carlo scenarios produce the potential for more extreme market declines than just 114 actual 30-year rolling historical scenarios. Instead, another distinction may be that with actual market returns, markets tend to at least pull back after several years of strong returns and to rebound after a crash. Yet, in the most extreme Monte Carlo projections, they often just keep rising or declining in dramatic fashion, regardless of how expensive or cheap the stocks are getting.

Tail Risk, Momentum, and Mean Reversion In Stock Market Returns

Mathematically, Monte Carlo analysis assumes that each year’s returns are entirely independent of the prior year(s). In other words, whether the prior year was flat, saw a slight increase, or was a raging bull market, Monte Carlo analysis assumes that the odds of a bear market decline the following year are exactly the same. And the odds of a subsequent decline in the following years also remain exactly the same, regardless of whether it would be the first or eighth consecutive year of a decline!

Yet, a look at real-world market data reveals that this isn’t really the case. Instead, market returns seem to exhibit at least two different trends. In the short-run, returns seem to exhibit “positive serial correlation” (i.e., momentum – whereby short-term positive returns are more likely to be followed by positive returns, and vice-versa), and, in the long-run, returns seem to exhibit “negative serial correlation” (i.e., mean reversion – whereby longer-term periods of low performance are followed by periods of higher performance, and vice-versa).

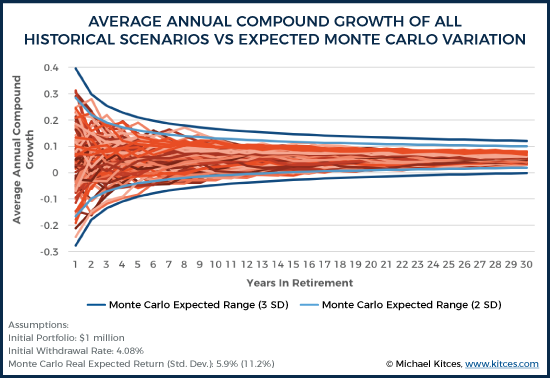

Because Monte Carlo projections are long-term projections spanning multiple years (or decades), it is the “negative serial correlation” (i.e., mean reversion) which may cause the “tails” of Monte Carlo projections to actually be more volatile and extreme than anything in the historical record. In other words, because most Monte Carlo analyses don’t account for mean reversion, this specific aspect of Monte Carlo projections will actually tend to overstate tail risk (not understate it!).
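One way to see the effect is to compare independent annual draws against draws with some negative serial correlation built in. The sketch below is an illustration only: it uses a simple first-order autoregressive process on annual returns, and the correlation of -0.3 is a hypothetical value chosen for demonstration, not an estimate from historical data. Real-world mean reversion plays out over multi-year horizons and is not necessarily well described by this model.

```python
import random
import statistics

def annualized_outcomes(mean, sd, phi, years=30, trials=2_000, seed=0):
    """Annualized compound real return for each simulated path.

    phi is the lag-1 correlation of annual return deviations:
    0.0 reproduces independent years; negative values add mean reversion.
    """
    rng = random.Random(seed)
    shock_sd = sd * (1.0 - phi ** 2) ** 0.5  # keeps each year's overall SD equal to sd
    outcomes = []
    for _ in range(trials):
        deviation = rng.gauss(0.0, sd)       # first year's deviation from the mean
        growth = 1.0
        for _ in range(years):
            growth *= 1.0 + mean + deviation
            deviation = phi * deviation + rng.gauss(0.0, shock_sd)
        outcomes.append(growth ** (1.0 / years) - 1.0)
    return outcomes

independent = annualized_outcomes(0.059, 0.112, phi=0.0)
reverting = annualized_outcomes(0.059, 0.112, phi=-0.3)  # hypothetical phi, illustration only

print(f"Spread of 30-year returns, independent years: {statistics.pstdev(independent):.2%}")
print(f"Spread of 30-year returns, mean-reverting:    {statistics.pstdev(reverting):.2%}")
```

Both runs have the same single-year mean and standard deviation; only the year-to-year linkage differs. The mean-reverting paths should cluster more tightly over 30 years, which is the sense in which independent draws can overstate long-term tail risk.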

For instance, the chart below shows the average annual compound growth rates (in real returns) of all scenarios in the historical record, and compares these to what Monte Carlo analysis would predict at the 2 standard deviation and 3 standard deviation levels of compound returns, simply using an (annually independent) normal distribution based on the historical mean and standard deviation. If fat tails were present in the returns of a 60/40 portfolio, the most extreme historical records should fall outside of the 2 standard deviation Monte Carlo bands about 5 percent of the time; yet, as the results appear to reveal, over 30-year time periods, historical markets have never fallen outside the 2 standard deviation thresholds, indicating long-term returns may actually exhibit less volatility than Monte Carlo analysis would predict! Which, in turn, means that Monte Carlo analysis may actually overstate the tail risk in long-term returns!
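The width of such bands can be approximated with the familiar square-root-of-time rule: if annual returns are independent, the standard deviation of the average annual return over n years is roughly the one-year standard deviation divided by the square root of n. The sketch below applies that approximation to the historical 5.9% mean and 11.2% standard deviation. It works with the arithmetic average rather than the compound return, so it will differ slightly from bands built on compound returns, and the function name is illustrative.

```python
import math

def average_return_band(mean, sd, years, k):
    """Approximate k-standard-deviation band for the average annual return
    over a number of independent years."""
    half_width = k * sd / math.sqrt(years)
    return mean - half_width, mean + half_width

for years in (1, 10, 30):
    low, high = average_return_band(0.059, 0.112, years, k=2)
    print(f"{years:>2}-year horizon, 2 SD band: {low:+.1%} to {high:+.1%}")
```

Under independence, about 5% of 30-year periods would be expected to land outside the 2 standard deviation band, which is the benchmark the historical record is being compared against.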

Do Monte Carlo Retirement Projections Overstate Or Understate Tail Risk?

In the case of highly leveraged hedge funds, the reality is that it doesn’t matter if returns are mean-reverting in the long run, because a margin call can end a highly leveraged investor in a single day or week (as occurred in the famous case of Long-Term Capital Management). However, financial planning projections typically do rely on annual data (with annual withdrawals), not daily (or weekly or monthly) data. And, in the annual context, these kinds of extreme events are actually just a short-term distraction that vanishes in long-term data! Instead, the problem is actually that Monte Carlo analysis may be overstating the dangers of failure by overestimating the frequency of long-term tail risk (in addition to overstating the potential upside in good scenarios)!

And this distinction is especially important given the popular tendency of financial advisors to reduce long-term return assumptions as a way of adjusting for Monte Carlo’s perceived understatement of tail risk. Recent academic research, such as the 2017 article Planning for a More Expensive Retirement by David Blanchett, Michael Finke, and Wade Pfau, urges that it is important to consider the planning consequences of forward-looking real portfolio returns in the 0% to 2% range (compared to a historical average of 5.9% for a 60/40 portfolio).

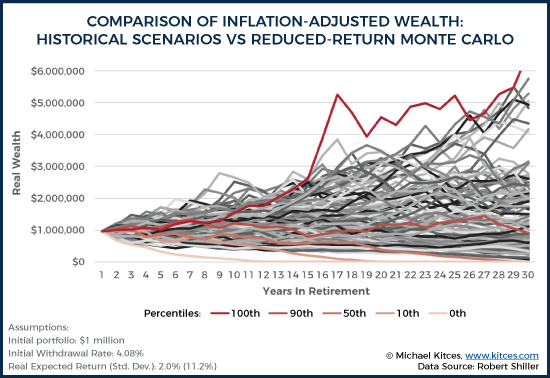

To explore the potential impact of these reduced return assumptions, we can evaluate 10,000 new Monte Carlo simulations using the same standard deviation (11.2%) but a lower mean real return (2%), and then compare the Monte Carlo results to actual historical scenarios.

As the results show, when long-term real returns are reduced to just 2%, then 50 percent of all Monte Carlo trials end up being worse than anything that has ever actually happened in history. In other words, assuming 2% real returns in Monte Carlo analysis may imply there is a 50% probability of a long-term path worse than the Great Depression or the stagflationary 1970s! The full comparison at various percentiles is included below.

And for those who assume the low end of Blanchett, Finke, and Pfau’s spectrum – with real returns at 0% – then Monte Carlo analysis would show that 82% of Monte Carlo trials are worse than anything that has ever happened in US history!

Notably, this doesn’t mean that the alternative of ignoring today’s low yields and high valuation is better. But it is important to understand the full impact of reduced return assumptions in a Monte Carlo analysis, particularly recognizing that Monte Carlo analysis already projects more long-term tail risk by not accounting for mean reversion. And, thanks to mean-reversion, it may be the case that even reduced 10-year returns don’t necessarily imply dramatic reductions to 30-year returns, though the sequence remains important. Ideally, Monte Carlo analysis tools would allow a combination – such as reduced real returns for 10 years, followed by normalized returns with mean reversion – but, unfortunately, no financial planning software is yet built to provide such regime-based Monte Carlo projections.
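The regime idea can be sketched by giving the simulation a schedule of assumptions, one per year, instead of a single mean and standard deviation. The example below is hypothetical: it assumes 2% real returns for the first 10 years and then the historical 5.9% for the remaining 20, keeps the 11.2% standard deviation throughout, and still treats each year as independent (so it captures the regime shift but not mean reversion itself). The function name and seed are arbitrary.

```python
import random

def success_rate(schedule, start=1_000_000, withdrawal=40_766, trials=10_000, seed=0):
    """Share of trials that fund every withdrawal.

    schedule holds one (mean, sd) pair of real-return assumptions per year.
    """
    rng = random.Random(seed)
    successes = 0
    for _ in range(trials):
        balance = start
        survived = True
        for mean, sd in schedule:
            z = rng.gauss(0.0, 1.0)   # drawn every year so trials line up across schedules
            if not survived:
                continue
            balance -= withdrawal     # real spending at the start of the year
            if balance < 0:
                survived = False
                continue
            balance *= 1.0 + mean + sd * z
        successes += survived
    return successes / trials

baseline = [(0.059, 0.112)] * 30
low_first_decade = [(0.02, 0.112)] * 10 + [(0.059, 0.112)] * 20

print(f"Historical assumptions all 30 years: {success_rate(baseline):.1%}")
print(f"2% real for 10 years, then 5.9%:     {success_rate(low_first_decade):.1%}")
```

Because both runs reuse the same random draws, the difference between the two success rates reflects only the lower first-decade return assumption.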

The bottom line, though, is simply to recognize that despite the common criticism that Monte Carlo analysis and normal distributions understate “fat tails”, when it comes to long-term retirement projections, typical Monte Carlo assumptions actually overstate extreme outcomes relative to historical returns due to the failure to account for mean reversion – yielding a material number of projections that are worse (or better) than any sequence that has actually occurred in history. Or, viewed another way, Monte Carlo analysis may actually project worse returns than those underlying the 4% safe withdrawal rate research, and choosing a Monte Carlo analysis with a 95% probability of success may unnecessarily constrain a retiree's spending by failing to account for the reality that bear markets do eventually fall enough that stocks get cheaper and begin to recover!

Which means, perhaps it’s time for financial planning software to do a better job of either allowing advisors to do retirement projections showing actual historical return sequences, or better show what the range of returns really are within a Monte Carlo analysis, in order to help prospective retirees understand just what a 95% Monte Carlo “probability of success” really means!

So what do you think? Do you still believe Monte Carlo analyses understate tail risk… or overstate it? Is it possible that utilizing low return assumptions just overstates tail risk further? How do you set Monte Carlo parameters for your clients? Please share your thoughts in the comments below!

The Flexible Retirement Planner should be able to model this idea of ‘Regime based projections’.

You’d need to use the Additional Inputs feature and specify different return/std dev. values for each range of years (eg 1 return/std pair for the next 5 years, another for the 5 years after that, and so on).

I am not all that familiar with the FRP tool, but I second the notion that there may be ways to address this important issue beyond just being aware of it (as essential as that is!). Hasn’t some research been done on applying MC techniques with correlations between performance and volatility of different assets?

It has, but with that approach I always worry about the uncertainty and instability of those correlations. It’s very easy to do a lot of work that ends up adding more noise than signal.

This ‘Nerd’s Eye View’ post tackles this very topic:

https://www.kitces.com/blog/monte-carlo-correlation-matrix-investment-assumptions-retirement-planning-projection/

Agreed!

Monte Carlo projections show more long-term volatility, resulting in faster and more catastrophic failures (to the downside), and more excess wealth in good scenarios (to the upside)!

I doubt it understates tail risk, underscore the “tail”. Remember we are really only dealing with 125 years of historical returns, so we haven’t experienced the full distribution of possible 30-year returns. “For the first time in history” happens every day, so assume past returns will match priors at your own peril. That said, I will continue to pour money into equities because I have a very long time horizon of over 30 years. Without a doubt equities are the best bet, but I am fully aware that returns could be very awful for a very long time.

Brutusrush, I share your sentiments about the caution we need to take extrapolating from a small amount of historical data and the usefulness of Monte Carlo for modeling a wider range of possibilities. I think an important question is whether we expect mean reversion to persist or whether we believe it is an anomaly that will go away in the future? I tend to believe the former, and I think there is a decent behavioral explanation for why mean reversion may persist. If we do assume mean reversion will persist, then the point I’m trying to make is that we should either acknowledge that traditional MC assumptions don’t account for mean reversion (and understand the further implications) or build mean reversion into the MC projections.

However, if we assume that mean reversion will not persist or want to test a plan against assumptions other than what we’ve seen historically (which may certainly be reasonable things to do) then these adjustments would not be needed. Ultimately, I’m not trying to say there’s one way to run projections, but just that we should understand all the implications of the methods we choose.

I’m not an expert in statistics and probability, so my thinking could be flawed. Based on what I’m reading, it seems to me that historical data does not support the serial independence of market returns. If that is true, then why should we prefer the results of a Monte Carlo simulation that assumes returns are serially independent? I’m not understanding why the use of a relatively small sample of real data would be any riskier than the use of a critical assumption which is unsupported by real data.

John,

Indeed, that’s a key point of the article. Markets do not appear to honor the underlying assumption of serial independence… due to momentum in the short term, and mean reversion in the long term. In the context of retirement projections, it’s the failure to account for mean reversion in the long run, and the (inappropriate?) assumption of serial independence that raises concerns about whether Monte Carlo overstates long-term risks.

– Michael

It just seems to me that if financial advisors were to strictly follow the results of the Monte Carlo analysis referred to above, they would be advising customers to reduce their standard of living by 25 or 35 percent – to prepare for a series of events which has never happened in the history of U.S. market returns. Should they also advise those clients to remove additional funds from their investment portfolio in order to start stockpiling sterno fuel and dried food in their basement? In order to prepare for nuclear winter or for the eruption of the Yellowstone super volcano?

John, I think both sides make good points here. As you point out, using assumptions which are too conservative and have little historical support can result in clients unnecessarily restricting themselves and leaving too much behind (especially if they have low or no bequest motives). At the same time, I think it is important to remember that we have limited historical data and the future may look wildly different than the past. I think a key to putting together a solid plan for retirement is figuring out how to balance those perspectives, rather than being overly reliant on any one approach.

Derek,

I think it should be the retiree client who makes the decision about whether to use historical data or unsupported statistical assumptions in deriving a retirement plan. I do not believe that advisors who use the results of Monte Carlo simulations are informing or will inform their clients that the plan they developed may be overly conservative. My recent experiences with advisors revealed that advisors are more inclined to promote fear in their customers. The ones I have spoken with are not at all explaining the possibility of underspending assets.

I appreciate research you have done recently about withdrawal rates and longevity risk. I hope that financial advisors – or their employers – will pay attention to such research and not blindly adhere to a 3.5% or 4% “rule” just because it is safe for the advisor.

Unfortunately, retirees have proven to conserve assets at a much higher rate than their stated objectives would imply. The psychological impact of losing employment cash flow is very powerful, and it raises the probability of passing a larger estate to heirs than originally intended. This points to another variable that is not accounted for: the probability that the client’s risk tolerance shifts over time, leading to lifestyle adjustments that run counter to stated goals.

Barney,

I understand that no longer having a paycheck will influence our perception of the risks facing us. However, I also think that media reports of retirement financial research – and the misleading interpretation of that research by some financial advisors – have unnecessarily heightened those fears of most retirees. When retirees are told over and over again that they will run out of money if they spend more than 4% a year – with that rate reduced to 3% recently – why shouldn’t their risk tolerance shift?

John – What you describe is then a catch-22. If the retiree is advised, then they are pushed to be too conservative. If the retiree is not advised, then the nature of the beast is to be too conservative. My contention is that the advised are less conservative than the unadvised. The typical retiree has saved one to two years of pre-retirement salary and is then so concerned about shortfall that it is typical to avoid spend-down altogether and instead live below their means. In pure hedonistic terms, if that gives them the most enjoyable retirement, then good for them. My point is simply that it is better to plan and then work the plan, which is best facilitated by being advised rather than going it alone, all else being equal.

Barney,

I was certainly not referring to those you describe as “the typical retiree”. It is the retiree who has accumulated $500,000 or $1,000,000 or more that has been inadequately served by those advisors and writers who seem to overly promote the fear of outliving assets, IMO. I have sat across the table from two such advisors, and have heard the same story from other friends who have also accumulated sizable nest eggs.

I’m not sure I can blame individual advisors. They have been promoting what their industry has accepted as rules of thumb. My hope is that such advisors will follow the work of smart researchers such as Michael Kitces and Wade Pfau and others who continue to challenge and refine industry practices.

Great analysis. MCS analysis in context of client goals has to be about the range of outcomes and a discussion about implications on client objectives. Making the result meaningful and intuitively displayed for an adviser to explain and client to comprehend is the real trick.

great analysis

First off, I very much appreciate this article and support the conclusions that are drawn. I do have a somewhat related, and perhaps not insignificant question about the core assumptions, though.

Why should one (or “us” collectively) assume that automatically rebalancing back to the initial 60/40 recipe annually (as a basis for the data in this entire discussion) is really a good idea in the first place? If 40% of the initial capital is invested in bonds (less volatile than stocks, typically), then why not just spend from the bond side, perhaps subject to a “low-ebb” floor on bond reserves (expressed as a percentage of the portfolio – maybe 20%, 15%, 10%, etc.), rather than systematically selling stocks from a position of weakness during a “normal”-duration equity downdraft, a period of unreliability that is often dramatic but usually relatively “short-ish”?

By my calculations, in a “typical” situation, the bond money could generally support the withdrawals for maybe 8 to 12 years. So to enhance the analysis and further the discussion, maybe a mini Monte Carlo simulation could be run on the bond side alone to test how much money needs to be in bonds to withstand the longest projected stock declines. That would let the full stock allocation benefit from mean reversion, versus the depleted balances that (I believe) would be present under the “systematic and pro-rata” withdrawal regime that underpins the data analyzed in this very soundly researched and excellent discussion. I would be thrilled if this type of derivative tack on the question could be considered and analyzed with the same objective rigor as the corpus of this data. The rebalancing can take place in neutral and positive times for stocks (“sell high” – I’ll bet you’ve heard that idea before).

My intuition is that this “spend the bond money in poor times” sequencing concept would produce yet more surplus, even beyond what the Monte Carlo vs. historical return comparison has already illustrated. To be clear, I’m suggesting the two ideas be considered together, not one idea vs. the other as an analytical approach.

The above approach is how I already manage my client funds, yet I lack the time and tools to “prove it out” as well as you guys have been able to with the original question that spurred the analysis.

The portfolio construction model and withdrawal sequencing protocol that I discussed above served my clients very well in 2000-2002 and 2007-2009.

I further welcome any thoughts and comments that you might bring forth.
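One way to make the “spend the bond money in poor times” idea testable is to write the one-year decision down as a rule. The sketch below is only one possible reading of the comment above, not the commenter's actual protocol: the function name, the 15% bond floor, the use of the prior year's stock return as the trigger, and the fallback to a 60/40 rebalance are all assumptions made for illustration.

```python
def withdraw_one_year(stocks, bonds, spending, stock_return,
                      bond_floor=0.15, target_stock_weight=0.60):
    """Apply one year's withdrawal using a 'bonds first in down years' rule.

    `stocks` and `bonds` are balances after the year's returns, and
    `stock_return` is that year's stock return. All thresholds are
    illustrative assumptions.
    """
    total = stocks + bonds
    # Would bonds stay above the floor (as a share of the portfolio) after spending?
    bonds_above_floor = bonds - spending >= bond_floor * (total - spending)
    if stock_return < 0 and bonds_above_floor:
        # Down year for stocks: fund spending from bonds and leave stocks alone.
        bonds -= spending
    else:
        # Otherwise withdraw, then rebalance back to the target mix.
        total -= spending
        stocks = total * target_stock_weight
        bonds = total - stocks
    return stocks, bonds

down_year = withdraw_one_year(500.0, 400.0, 40.0, stock_return=-0.20)
up_year = withdraw_one_year(600.0, 400.0, 40.0, stock_return=0.10)
print("After a down year:", down_year)
print("After an up year: ", up_year)
```

A rule like this could be dropped into either a historical or a Monte Carlo loop in place of annual pro-rata rebalancing, which would allow the two approaches to be compared on the same return sequences.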

Am I correct in thinking that the analysis assumes a static rate of inflation when considering distributions? I saw one part of the article that, in parentheses, attributed a 2.5% annual inflation rate. I didn’t see inflation mentioned/assumed anywhere else.

If so, that inflation assumption is a fly in this ointment. That’s just as flawed an assumption as using a static rate of return, is it not? Inflation rates have been highest, by a long shot, during flatter market periods (such as the mentioned 1966-1981 period). Using a 2.5% annual inflation assumption is bananas when considering such a period. Real inflation rates were WAY higher than 2.5% during that 1966-1981 period. Accordingly, and obviously, a 2.5% inflation assumption would result in a grossly overstated distribution capability.

Ideally, historical inflation would be used, in the order it occurred, in a distribution analysis, because history indicates very real correlations between choppy market return periods (like 1966-1981) and high inflation. Right?

Did I misread/”under-read” this article/analysis? Or is the static inflation assumption a major flaw when considering worst-case distribution analyses?

Jack, you are correct that adjusting for actual historical inflation makes a big difference. All of the historical scenarios here are in real terms, so they do make this adjustment. Because the Monte Carlo parameters were in real terms (5.9% real return and 11.2% standard deviation), there was no standalone inflation assumption for this model. The section that mentions an average of 2.5% inflation was just an attempt to make “200% inflation-adjusted ending wealth” a bit more concrete for readers, in order to illustrate just how well things actually went in over 30% of the historical scenarios, but the actual nominal values in the underlying historical scenarios would have been much different.
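To illustrate what “parameters in real terms” means mechanically, here is a minimal sketch, assuming normally distributed and serially independent annual real returns with the 5.9% mean and 11.2% standard deviation mentioned above. The 4% initial withdrawal, the start-of-year spending convention, the path count, and the function name are illustrative assumptions, not details confirmed by the article.

```python
import random

def simulate_real_paths(n_paths=2000, years=30, mean=0.059, sd=0.112,
                        withdrawal=0.04, seed=0):
    """Return ending real wealth (start = 1.0) for each simulated path.

    Returns are drawn in real terms, so the withdrawal stays constant
    in real dollars and no separate inflation assumption is needed.
    """
    rng = random.Random(seed)
    endings = []
    for _ in range(n_paths):
        wealth = 1.0
        for _ in range(years):
            wealth -= withdrawal          # spend at the start of the year
            if wealth <= 0:
                wealth = 0.0              # portfolio depleted
                break
            wealth *= 1.0 + rng.gauss(mean, sd)
        endings.append(wealth)
    return endings

endings = simulate_real_paths()
success_rate = sum(w > 0 for w in endings) / len(endings)
print(f"Share of paths with money left after 30 years: {success_rate:.1%}")
```

Because everything is expressed in today's dollars, an ending value of 2.0 corresponds to the “200% inflation-adjusted ending wealth” described above.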

I suspect 100 years of Japanese data may show the Monte Carlo fat tails are real world problems to worry about….

Tim,

If you look at any country that was on the losing side of a global war – e.g., Japan and Italy after World War II, or Germany after either WWI or WWII – you see drastically worse sustainable withdrawal rates.

However, in all seriousness, I’m not sure that a Monte Carlo analysis is the best way to evaluate the risk that your retirement might be ruined because your home country loses World War 3.

– Michael

Michael, I should have said that I liked the analysis and totally agree that the standard Monte Carlo process with no mean reversion will overstate risk and that fat tails do seem to be a short term phenomenon. So I’m not much of a fan of the standard Monte Carlo process either.

Having said that, just looking at US data – where we know long term outcomes have been good – isn’t the harshest test going around. Japan in the late 80’s may be an interesting test – no war there. And then, there is the Donald…

Liked everything you said Tim. When you said “then there is the Donald” you’re implying more 20th century-like US returns right? 🙂

Great analytics. I believe that the Bengen study was too conservative.

Jim Cloonan’s book “Investing at Level 3” is based on the idea that 40% bonds is too big a price to pay for protection against volatility that is not that bad when it happens.

He will be happy to see your work

I think it is good enough to be your PhD thesis. Best of Luck, look forward to seeing more of you here………………..Gordon

Interesting piece Michael thanks.

My own view is that Monte Carlo (aka stochastic) simulations are flawed because of the central assumption upon which they’re based – namely that prices are at all times perfectly priced and thus the chances of movements in either direction are the same at all times.

If anyone seriously believes that the US Stockmarket, for example, was perfectly priced both in January 2000 and in 1982 then they’ll be happy to rely on Monte Carlos.

If they don’t, then they won’t. Simples ?

I see that you’re drawing on Shiller data – which is so useful.

Here’s his CAPE chart – which I think supports my point above.

https://uploads.disquscdn.com/images/b3272348a1cb740a8d4da8f6fd9df794b6c2decf21a696474fba8ee274aeca87.jpg

Paul,

Indeed, we’ve written extensively about using Shiller CAPE on this website as a means to adapt retirement planning recommendations and projections. See https://www.kitces.com/blog/shiller-cape-market-valuation-terrible-for-market-timing-but-valuable-for-long-term-retirement-planning/ from a few years ago, and https://www.kitces.com/may-2008-issue-of-the-kitces-report/ from almost a decade ago on using Shiller CAPE to adjust safe withdrawal rate recommendations. 🙂

– Michael

Further neat chart from someone whose work you’re familiar with.

https://uploads.disquscdn.com/images/efc4e12b31f250acc50cc63eedd7cf67a5c92bf27caa25bdf984f3c4271e0f74.jpg

Yup, love how Wade extended my original Shiller PE + SWR research in 2008 (https://www.kitces.com/may-2008-issue-of-the-kitces-report/) with the incorporation of bond yields as well. He also did some interesting extensions with dividend yields, too!

– Michael

Thanks Michael. That link is broken though . . .

Sorry, fixed now. Disqus included the closing parenthesis as part of the URL by mistake! Oy! 🙂

Thanks

Thanks for the hard work! Fantastic ways of showing the data. This one pairs well with Derek’s “Wrong Side of Maybe” article.

A number of math folks talking about this blog on bogleheads https://www.bogleheads.org/forum/viewtopic.php?f=10&t=222695&sid=ef201ab72d1a8e81c7c437132cfc0c29

Very interesting and worthwhile post, Derek.

A very interesting study. I think the moral here is that we shouldn’t expect these models to predict the range of outcomes with much confidence. We are taking historical economic data that is influenced by countless sources including tough decisions by the Federal Reserve and compressing that into two numbers (mean and variance). The author has some useful guidance on how the Monte Carlo outcomes appear to deviate from the historical record that is being modeled. Every simulation result should be interpreted with a grain of salt in the context of how reliable the model is.

There is a tendency for modelers to fall in love with their models and expect more than is reasonable from them. The statistician George Box put it best: “all models are wrong, but some are useful.”

“There is a tendency for modelers to fall in love with their models and expect more than is reasonable from them.”

Well said sir!

Interesting article. Jim Otar discussed the three key constraints inherent in Monte Carlo simulation in this paper (http://www.retirementoptimizer.com/articles/mcimca0001.PDF) back in 2007. Also, to accommodate the “fat tail” distribution model that historical market behavior exhibits, he proposed a two-layer Monte Carlo simulator which minimizes (or even eliminates) the three key constraints mentioned above and more closely resembles real-world market history.

In my view, Derek makes an excellent point in this article. Stock returns from one year to the next “are interrelated”. To assume otherwise (as MC analysis does, especially in the outlier worst- or best-case scenarios) is just not representative of how markets work.

Having said that, I am all for being conservative with my annual withdrawal % (I am with mine). It is still good to remind ourselves that a WD strategy which survives MC simulations >90% or 95% of the time is essentially bulletproof.

I wonder how much it would change if you built the 30-year periods from monthly or weekly values. So we could look at Jan 1, 1970 to Dec 31, 1999, but then also at Feb 1, 1970 to Jan 31, 2000. Maybe that would change the results a bit.

Holy Crap! don’t know how I missed this article for the last month. Derek, my friend, you are the man! No other way around it.

Been reading a lot of Taleb and Mandelbrot and others and my brain is heavy with all this. Your article is crisp and for a professional layperson, if you will, easy to follow. So kudos.

I very much get the issue that the US in the 20th century was the exception and not the rule and there is no guarantee that the 21st will be similar. I’ve been thinking about that a lot actually. But what is an advisor supposed to do? Plan for the unpredictable and certainly unforeseen?

So, I will continue to use the year by year approach to planning and adjust accordingly with belt tightening if the year preceding was down.

Anyway keep it up Derek. This is great stuff.

Great work. A few thoughts:

– I would argue that monthly volatility can’t be totally dismissed. Actual retirees may not have the flexibility to fund their cash needs on a yearly basis, and therefore they may have to take money out at the worst possible time.

– I’ve often thought that Monte Carlo based modeling could/should eliminate trials which have compounded returns higher or lower than historical returns (or bands entered by the advisor). Based on your work, this might not be the best way to eliminate the outlier trials. Instead, we might have to try to eliminate trials that have 10-year (or 15-year) periods of significant divergence from market reality.

– I totally support your conclusion that MCS software needs to be improved to “allow a combination – such as reduced real returns for 10 years, followed by normalized returns with mean reversion.” After all, we are trying to model a potential reality, not a mathematical hypothesis.

– Speaking of software, I like to use my soapbox to encourage MGP to improve their state tax calculations.

Jay
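The “reduced real returns for 10 years, followed by normalized returns” idea quoted in the comment above can be sketched as a two-regime return generator. This is a minimal illustration only: the 3% reduced real return is a hypothetical placeholder, the 5.9% and 11.2% figures are the real-return parameters quoted elsewhere in this thread, and the sketch does not model mean reversion within either regime.

```python
import random

def regime_path(years=30, low_mean=0.03, normal_mean=0.059, sd=0.112,
                low_years=10, rng=None):
    """Draw one path of annual real returns with a reduced-return first decade."""
    rng = rng if rng is not None else random.Random()
    path = []
    for year in range(years):
        # Use the reduced mean during the first `low_years`, the normal mean after.
        mean = low_mean if year < low_years else normal_mean
        path.append(rng.gauss(mean, sd))
    return path

rng = random.Random(42)
paths = [regime_path(rng=rng) for _ in range(5000)]
first_decade = sum(sum(p[:10]) for p in paths) / (5000 * 10)
later_years = sum(sum(p[10:]) for p in paths) / (5000 * 20)
print(f"Average return, years 1-10:  {first_decade:.2%}")
print(f"Average return, years 11-30: {later_years:.2%}")
```

Feeding paths like these into a withdrawal loop would penalize the early years, where sequence risk bites hardest, without lowering the return assumption for the entire horizon.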

Keep in mind that one can run Monte Carlo analytics using distributions other than the normal to account for fat tails. An MC with a Student’s t distribution, for example. Another path is to use so-called extreme value theory (EVT) for modeling/forecasting tails. Here’s an example I wrote about with some basic modeling in R:

http://www.capitalspectator.com/tail-risk-analysis-in-r-part-ii-extreme-value-theory/
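As a rough illustration of the Student’s t suggestion (in Python rather than the R used in the linked post, and not taken from it), the sketch below draws annual returns from a t distribution rescaled to the same mean and standard deviation as a normal model, then compares how often each produces a year more than three standard deviations below the mean. The choice of 5 degrees of freedom, the sample size, and the function names are assumptions; the 5.9% and 11.2% parameters are the real-return figures quoted earlier in this thread.

```python
import random

def student_t_return(rng, mean, sd, df):
    """Draw one return from a Student's t distribution rescaled to `mean` and `sd`.

    Requires df > 2 so that the variance is finite.
    """
    z = rng.gauss(0.0, 1.0)
    chi2 = rng.gammavariate(df / 2.0, 2.0)   # chi-square with df degrees of freedom
    t = z / (chi2 / df) ** 0.5               # standard Student's t draw
    scale = sd * ((df - 2.0) / df) ** 0.5    # t has variance df / (df - 2)
    return mean + scale * t

def tail_share(draws, mean, sd, k=3.0):
    """Share of draws more than k standard deviations below the mean."""
    return sum(r < mean - k * sd for r in draws) / len(draws)

rng = random.Random(7)
mean, sd, n = 0.059, 0.112, 200_000
normal_draws = [rng.gauss(mean, sd) for _ in range(n)]
t_draws = [student_t_return(rng, mean, sd, df=5) for _ in range(n)]
print(f"Normal: {tail_share(normal_draws, mean, sd):.4%} of years below mean - 3 SD")
print(f"t(5):   {tail_share(t_draws, mean, sd):.4%} of years below mean - 3 SD")
```

Note that this fattens the tails of individual years only. The draws are still serially independent, so it does not address the mean reversion question raised in the article.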

Does “bootstrapping” sampling have the same drawbacks as parametric Monte Carlo? If you allow sample blocks of up to 5 years, you’d account for at least medium-term “return to mean” and other cycles.
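For concreteness, a block bootstrap of the kind described in this question might look like the sketch below. It uses a circular variant with a fixed 5-year block length, both of which are assumptions, and the return series is a made-up placeholder rather than actual market data.

```python
import random

def block_bootstrap(history, years=30, block_len=5, rng=None):
    """Build one resampled path by stitching together contiguous blocks of history.

    Blocks wrap around the end of the series (a circular block bootstrap),
    so every starting year is equally likely to be chosen.
    """
    rng = rng if rng is not None else random.Random()
    n = len(history)
    path = []
    while len(path) < years:
        start = rng.randrange(n)
        path.extend(history[(start + i) % n] for i in range(block_len))
    return path[:years]

# Made-up placeholder returns, NOT actual market data.
placeholder_history = [0.10, -0.05, 0.08, 0.12, -0.15, 0.20, 0.03, 0.07, -0.02, 0.09]
sample = block_bootstrap(placeholder_history, rng=random.Random(3))
print(sample)
```

Within each block the historical ordering is preserved, so momentum or reversal effects shorter than the block length carry over. The blocks themselves are still drawn independently, so any dependence that plays out over longer than the block length is lost.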